Convolutional Neural Networks (CNNs) are today’s building blocks for image classification with machine learning. Before classifying an image, however, they perform another very useful task: extracting relevant features from it. Feature extraction is how CNNs recognize the key patterns of an image in order to classify it. This article shows an example of how to perform feature extraction using TensorFlow and the Keras functional API. To formalize these CNN concepts, though, we first need to talk about pixel space.

Pixel space

Pixel space is exactly what the name suggests: it is the space where the image gets converted into a matrix of values, where each value corresponds to an individual pixel. Therefore, the original image that we see, when fed into the CNN, gets converted into a matrix of numbers. In grayscale images, these numbers typically range from 0 (black) to 255 (white), and values in-between are shades of gray. In this article, all images have been normalized, that is, every pixel has been divided by 255 so its value lies in the interval [0, 1].
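As a minimal sketch of that normalization step, the snippet below scales a tiny hand-made grayscale image into [0, 1]. The array `image_uint8` is a hypothetical example, not one of the images used in this article.

```python
import numpy as np

# Hypothetical 2x2 grayscale image with 8-bit pixel values (0 = black, 255 = white).
image_uint8 = np.array([[0, 64],
                        [128, 255]], dtype=np.uint8)

# Cast to float32 (the default float type in Keras) and divide by 255
# so that every pixel lies in the interval [0, 1].
image_normalized = image_uint8.astype(np.float32) / 255.0

print(image_normalized.shape)
print(image_normalized.min(), image_normalized.max())
```

The same division applies unchanged to an RGB image, where the array simply has a third axis for the color channels.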

CNN and pixel space

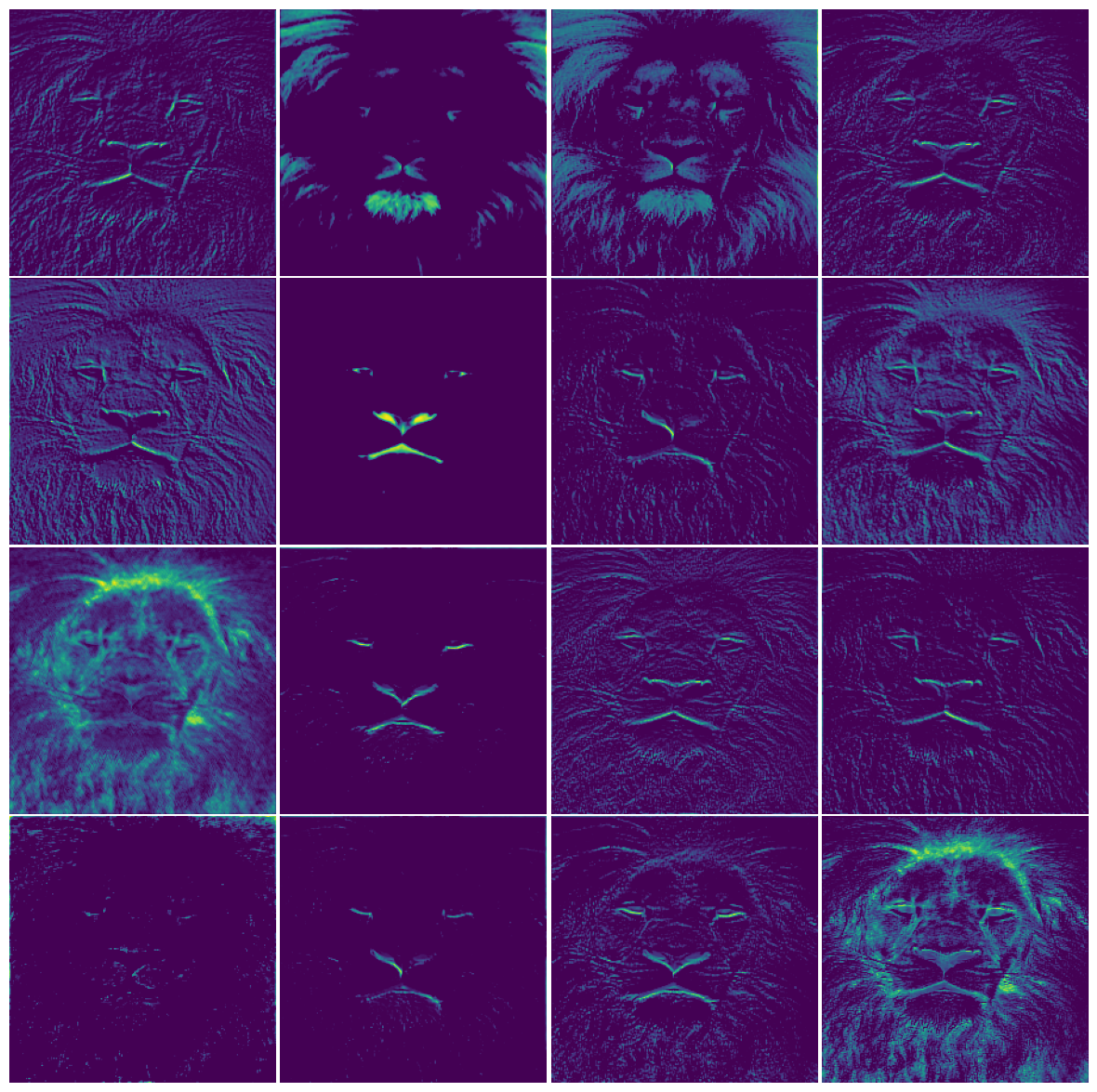

What a CNN does with the image in its pixel representation is apply filters and process it to extract the features relevant to the final “decision”, which is to assign that image to a class. For example, in the image at the top of the page, the CNN paid a lot of attention to the lion’s mouth, tongue, and eyes (and to strong contours in general), and these features are extracted further as we go deeper into the network. In short, the more specialized a CNN is at classification, the better it is at recognizing the key features of an image.
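To make "applying a filter" concrete, here is a small sketch in plain NumPy. It assumes a single-channel image, no padding and a hand-written vertical-edge kernel; a trained CNN learns its kernels instead, and the layers in this article use 'same' padding. The helper `apply_filter` is hypothetical and only illustrates the sliding-window operation followed by ReLU.

```python
import numpy as np

def apply_filter(image, kernel):
    """Slide `kernel` over a 2D `image` (no padding, stride 1), then apply ReLU."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w), dtype=np.float32)
    for i in range(out_h):
        for j in range(out_w):
            # Element-wise product of the window and the kernel, summed up.
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return np.maximum(out, 0.0)

# Toy image: dark left half, bright right half.
image = np.zeros((5, 6), dtype=np.float32)
image[:, 3:] = 1.0

# Hand-written kernel that responds where brightness increases to the right.
kernel = np.array([[-1, 0, 1],
                   [-1, 0, 1],
                   [-1, 0, 1]], dtype=np.float32)

feature_map = apply_filter(image, kernel)
print(feature_map)
```

The resulting feature map is non-zero only in the columns that straddle the dark-to-bright boundary, which is the "strong contour" behavior described above.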

The goal

With that being said, the goal is simple: to see the level of specialization of a CNN when it comes to feature extraction.

The method

To do this, I trained two CNNs with the same architecture but different training set sizes: one with 50K images (the benchmark, the smart one) and the other with 10K images (the dummy one). After that, I sliced up the layers of each CNN to check what the network is seeing and what sense it makes of the image fed into it.

Dataset

The dataset used for this project was the widely used CIFAR-10 image dataset [1], a public domain dataset of 60K images divided into 10 classes, of which 10K images are used as a hold-out validation set. The images are 32×32 pixels in size and RGB-colored, which means 3 color channels.

To prevent data leakage, I set one image aside to use as the test image for feature recognition, so this image was not used in either training run. I present to you our guinea pig: the frog.
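The data preparation could be sketched roughly as follows. This is an assumption-laden illustration rather than the exact code used for this article: the helper `prepare_training_set` is hypothetical, taking the first `n_train` images is just one possible way to build the smaller training set, and the synthetic arrays stand in for the real ones, which would come from `tf.keras.datasets.cifar10.load_data()`.

```python
import numpy as np

def prepare_training_set(images, labels, n_train, holdout_index, num_classes=10):
    """Return (x, y_one_hot, held_out_image) with pixels scaled to [0, 1]."""
    labels = labels.reshape(-1)
    # Boolean mask that drops the single test image from the training pool.
    keep = np.arange(len(images)) != holdout_index
    x = images[keep][:n_train].astype(np.float32) / 255.0
    # One-hot labels, as expected by the categorical cross-entropy loss.
    y = np.eye(num_classes, dtype=np.float32)[labels[keep][:n_train]]
    held_out = images[holdout_index].astype(np.float32) / 255.0
    return x, y, held_out

# Synthetic stand-in with the CIFAR-10 layout: 32x32 RGB images, labels of shape (N, 1).
rng = np.random.default_rng(0)
fake_images = rng.integers(0, 256, size=(100, 32, 32, 3), dtype=np.uint8)
fake_labels = rng.integers(0, 10, size=(100, 1))

x_small, y_small, test_image = prepare_training_set(
    fake_images, fake_labels, n_train=20, holdout_index=7)
print(x_small.shape, y_small.shape, test_image.shape)
```

Calling the helper twice with different values of `n_train` and the same `holdout_index` would give the two training sets while keeping the test image out of both.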

The implementation is shown in the code snippet below. To properly slice the layers of the CNN, it is necessary to use the Keras functional API in TensorFlow instead of the Sequential API. It works as a cascade, where each layer is called on the output of the previous one.

    import tensorflow as tf
    from tensorflow.keras.models import Model
    from tensorflow.keras.layers import Input, Conv2D, MaxPool2D, Dense, Dropout, Flatten
    from tensorflow.keras.callbacks import ModelCheckpoint, EarlyStopping


    def get_new_model(input_shape):
        '''
        This function returns a compiled CNN with the architecture described in this article.
        '''
        # Defining the architecture of the CNN
        input_layer = Input(shape=input_shape, name='input')

        # First block: two 3x3 convolutions followed by 2x2 max pooling
        h = Conv2D(filters=16, kernel_size=(3,3),
                   activation='relu', padding='same', name='conv2d_1')(input_layer)
        h = Conv2D(filters=16, kernel_size=(3,3),
                   activation='relu', padding='same', name='conv2d_2')(h)
        h = MaxPool2D(pool_size=(2,2), name='pool_1')(h)

        # Second block
        h = Conv2D(filters=16, kernel_size=(3,3),
                   activation='relu', padding='same', name='conv2d_3')(h)
        h = Conv2D(filters=16, kernel_size=(3,3),
                   activation='relu', padding='same', name='conv2d_4')(h)
        h = MaxPool2D(pool_size=(2,2), name='pool_2')(h)

        # Third block (no pooling)
        h = Conv2D(filters=16, kernel_size=(3,3),
                   activation='relu', padding='same', name='conv2d_5')(h)
        h = Conv2D(filters=16, kernel_size=(3,3),
                   activation='relu', padding='same', name='conv2d_6')(h)

        # Classifier head. Note that dense_1 comes before Flatten, so it acts on
        # the last (channel) axis of the feature maps.
        h = Dense(64, activation='relu', name='dense_1')(h)
        h = Dropout(0.5, name='dropout_1')(h)
        h = Flatten(name='flatten_1')(h)
        output_layer = Dense(10, activation='softmax', name='dense_2')(h)

        # To generate the model, we pass the input layer and the output layer
        model = Model(inputs=input_layer, outputs=output_layer, name='model_CNN')

        # Next we apply the compile method
        model.compile(optimizer='adam',
                      loss='categorical_crossentropy',
                      metrics=['accuracy'])

        return model

The specs of the architecture are shown below in Fig. 1.

The optimizer used was Adam, the loss function was categorical cross-entropy, and the evaluation metric was simply accuracy, since the dataset is perfectly balanced.

Now we can slice some strategic layers of the two CNNs to check how far the images have been processed. The code implementation is shown below:

    benchmark_layers = model_benchmark.layers
    benchmark_input = model_benchmark.input
    layer_outputs_benchmark = [layer.output for layer in benchmark_layers]
    features_benchmark = Model(inputs=benchmark_input, outputs=layer_outputs_benchmark)

What is happening here is as follows: the first line accesses the layers of the model, and the second line returns the input of the whole CNN. The third line builds a list with the output of each layer, and finally we create a new model whose outputs are the outputs of all the layers. This way we can take a look at what is happening in between layers.

Very similar code was written to access the layers of our dummy model, so it is omitted here. Now let’s look at the images of our frog as processed by different layers of our CNNs.
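Calling `features_benchmark.predict(...)` on a batch containing a single image should return one array per layer, and a convolutional layer's array has shape (1, height, width, filters). The sketch below shows one way such an array could be arranged into a single grid for plotting. The helper `tile_feature_maps` is hypothetical, and a random array stands in for a real activation so that the snippet runs without the trained model.

```python
import numpy as np

def tile_feature_maps(activation, n_cols=4):
    """Arrange a (1, H, W, C) activation into one 2D grid of C channel images."""
    _, h, w, c = activation.shape
    n_rows = -(-c // n_cols)  # ceiling division
    grid = np.zeros((n_rows * h, n_cols * w), dtype=activation.dtype)
    for k in range(c):
        row, col = divmod(k, n_cols)
        grid[row * h:(row + 1) * h, col * w:(col + 1) * w] = activation[0, :, :, k]
    return grid

# Random stand-in for the conv2d_1 output on one 32x32 image (16 filters).
# With the real model, this would be one element of the list returned by
# features_benchmark.predict(batch_with_one_image).
fake_activation = np.random.default_rng(0).random((1, 32, 32, 16)).astype(np.float32)

grid = tile_feature_maps(fake_activation)
print(grid.shape)  # (128, 128)
```

The grid can then be displayed with any image plotting function, one tile per filter.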

First convolutional layer

Dummy

Fig. 2 shows the outputs of the 16 filters of the first convolutional layer (conv2d_1). We can see that the images are not heavily processed, and there is a lot of redundancy. One could argue that this is only the first convolutional layer, which explains why the processing isn’t so heavy, and that’s a fair observation. To address it, we shall look at the first layer of the benchmark.

Benchmark

The benchmark classifier shows a much more processed image, to the point where most of these images are not recognizable anymore. Remember: this is only the first convolutional layer.

Last convolutional layer

Dummy

As expected, the image is not recognizable anymore, since at this point it has gone through 6 convolutional layers and 2 pooling layers, the latter explaining the lower dimensions of the images. Let’s see what the last layer of the benchmark looks like.
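The lower dimensions follow directly from the architecture: the 'same'-padded convolutions keep the height and width, while each 2×2 max pooling layer halves them. A small sketch that traces this through the layer names used in the model (the helper `trace_spatial_size` is only illustrative):

```python
def trace_spatial_size(size, layers):
    """Follow the height/width of a square feature map through a list of layers."""
    sizes = {}
    for name, kind in layers:
        if kind == "pool":
            # 2x2 max pooling with the default stride halves the size.
            size = size // 2
        # 'same'-padded convolutions with stride 1 leave the size unchanged.
        sizes[name] = size
    return sizes

layers = [
    ("conv2d_1", "conv"), ("conv2d_2", "conv"), ("pool_1", "pool"),
    ("conv2d_3", "conv"), ("conv2d_4", "conv"), ("pool_2", "pool"),
    ("conv2d_5", "conv"), ("conv2d_6", "conv"),
]

sizes = trace_spatial_size(32, layers)
print(sizes["conv2d_1"], sizes["conv2d_6"])  # 32 8
```

So each of the 16 feature maps at conv2d_6 is 8×8 pixels, compared with 32×32 at conv2d_1.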

Benchmark

This is even more processed, to the point where the majority of pixels are black, which shows that the important features were selected, and the rest of the image is basically thrown away.

We can see that the processing degrees are very different for the same slice of the network. Qualitative analysis indicates that the benchmark model is more aggressive when it comes to extracting useful information from the input. This is particularly evident from the first convolutional layer comparison: the frog image output is much less distorted and much more recognizable on the dummy than it is on the benchmark model.

This suggests that the benchmark is more efficient at throwing away elements of the image that are not useful when predicting the class, while the dummy classifier, uncertain of how to proceed, considers more features. We can see in Fig. 6 that the benchmark (in blue) throws away more color pixels than the dummy model (in red), which shows a longer tail in its distribution of colors.
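One hedged way to put numbers on "throws away more pixels" is to measure how many activation values are zero and how far the tail of the distribution extends. The sketch below uses synthetic ReLU-like activations, not the real outputs of the two models, and the helper `sparsity_summary` is hypothetical.

```python
import numpy as np

def sparsity_summary(activation, zero_threshold=1e-6):
    """Return (fraction of values at or near zero, 95th percentile of the values)."""
    values = np.asarray(activation, dtype=np.float32).ravel()
    frac_zero = float(np.mean(values <= zero_threshold))
    tail = float(np.percentile(values, 95))
    return frac_zero, tail

# Synthetic stand-ins shaped like a conv2d_6 output: (1, 8, 8, 16).
rng = np.random.default_rng(0)
shape = (1, 8, 8, 16)
aggressive = np.maximum(rng.normal(-1.5, 1.0, size=shape), 0.0)  # mostly zeros
permissive = np.maximum(rng.normal(0.5, 1.0, size=shape), 0.0)   # longer tail

agg_zero, agg_tail = sparsity_summary(aggressive)
per_zero, per_tail = sparsity_summary(permissive)
print(f"aggressive: {agg_zero:.2f} zeros, 95th percentile {agg_tail:.2f}")
print(f"permissive: {per_zero:.2f} zeros, 95th percentile {per_tail:.2f}")
```

Applied to the real activations, a higher zero fraction and a shorter tail would correspond to the more aggressive pruning attributed to the benchmark.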

If we take a look at the pixel distribution of our original frog image, we have Fig. 7, which shows a much more symmetric distribution, centered more or less around 0.4.

From an information theory standpoint, the differences between the probability distribution of the original image and those of the resulting images after the convolutional layers represent a massive information gain.

Looking at Fig. 6 and comparing it with Fig. 7, we are much more certain of which pixels we are going to find in the former than in the latter. Hence, there is a gain of information. This is a very brief and qualitative exploration of Information Theory and opens up a doorway to a vast area. For more information about Information (pun intended), see this post.
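"More certain of which pixels we are going to find" can be quantified, under some assumptions, with the Shannon entropy of the pixel-value histogram: the lower the entropy, the more predictable the pixel values. The sketch below uses synthetic data (a spread-out distribution centered near 0.4 and a distribution that is mostly zeros), and both the helper `histogram_entropy` and the choice of 32 bins are illustrative assumptions.

```python
import numpy as np

def histogram_entropy(values, bins=32, value_range=(0.0, 1.0)):
    """Shannon entropy (in bits) of the histogram of `values`.

    Values outside `value_range` are ignored by np.histogram.
    """
    counts, _ = np.histogram(values, bins=bins, range=value_range)
    p = counts[counts > 0] / counts.sum()
    return float(-np.sum(p * np.log2(p)))

rng = np.random.default_rng(0)

# Stand-in for the original image: values spread symmetrically around 0.4.
spread = np.clip(rng.normal(0.4, 0.15, size=1024), 0.0, 1.0)

# Stand-in for a late feature map: almost every value is zero.
peaked = np.zeros(1024)
peaked[:32] = rng.uniform(0.0, 1.0, size=32)

print(f"spread: {histogram_entropy(spread):.2f} bits")
print(f"peaked: {histogram_entropy(peaked):.2f} bits")
```

The peaked distribution has the lower entropy, which matches the qualitative statement that we know better what to expect from the processed image.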

And finally, a way to look at the uncertainty in the classifiers’ answers is to look at the probability distribution over the classes. This is the output of the softmax function at the end of our CNN. Fig. 8 (left) shows that the benchmark is much more certain of the class, with a distribution peaked on the frog class, while Fig. 8 (right) shows a confused dummy classifier, with the highest probability on the wrong class.
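As a small illustration of what a peaked versus a confused softmax output looks like, the sketch below applies softmax to two made-up score vectors. The scores are invented for the example and are not the outputs of the two models; the class names follow the standard CIFAR-10 label order, in which frog is index 6.

```python
import math

CLASS_NAMES = ["airplane", "automobile", "bird", "cat", "deer",
               "dog", "frog", "horse", "ship", "truck"]

def softmax(scores):
    """Turn a list of raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def top_class(probs):
    """Return (class name, probability) of the most likely class."""
    idx = max(range(len(probs)), key=probs.__getitem__)
    return CLASS_NAMES[idx], probs[idx]

# Invented scores: one clearly favoring "frog", one nearly flat.
confident = softmax([0.1, 0.0, 0.5, 0.3, 0.2, 0.1, 6.0, 0.0, 0.1, 0.2])
confused = softmax([1.0, 0.8, 1.2, 1.4, 1.1, 1.0, 1.3, 0.9, 1.0, 0.9])

print(top_class(confident))
print(top_class(confused))
```

In the first case nearly all of the probability mass sits on one class; in the second it is spread almost evenly, so the top class wins by a small margin and can easily be the wrong one.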
